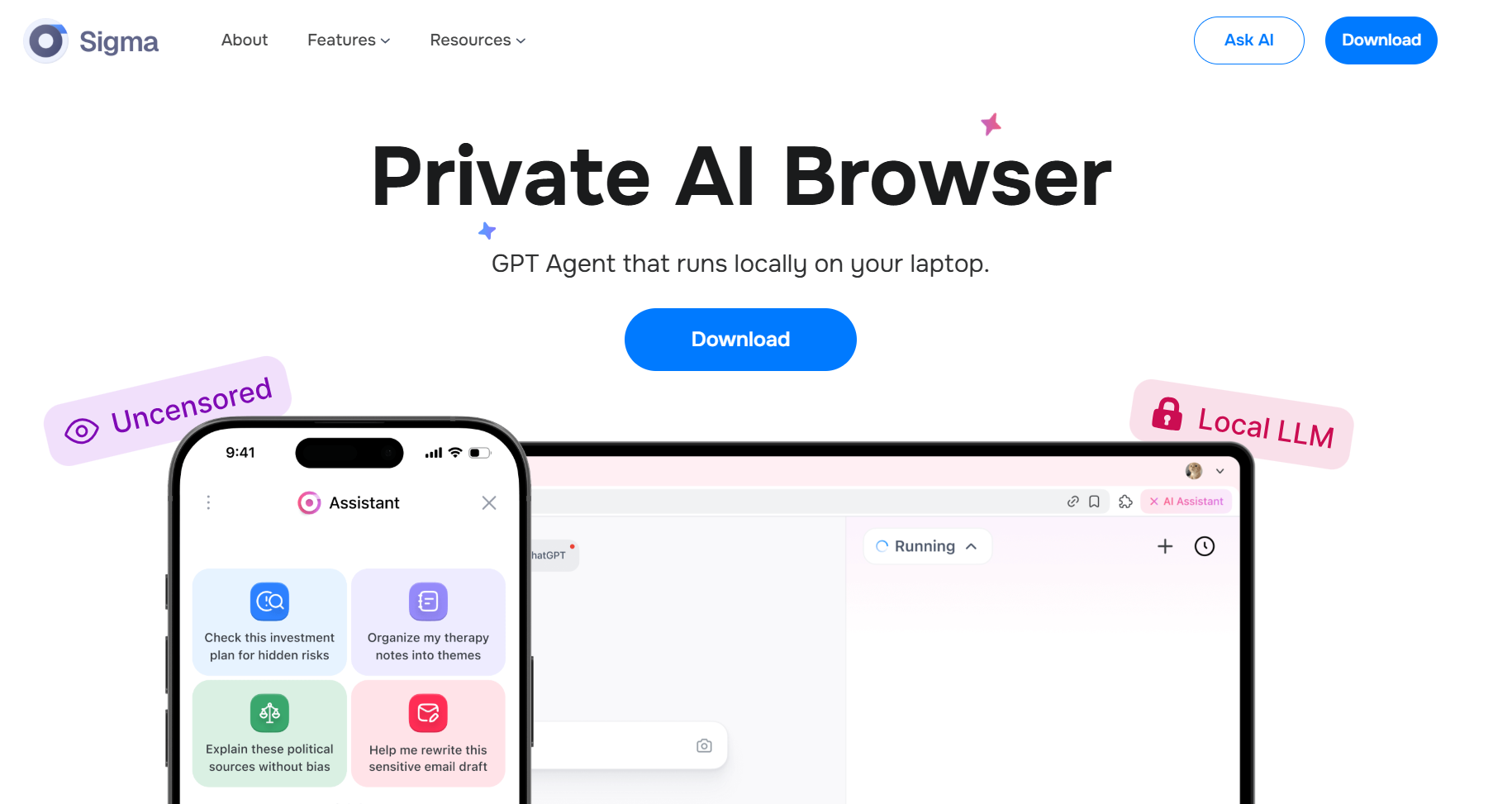

Sigma Browser on Friday announced the launch of its privacy-focused web browser, which includes an AI model that runs locally and therefore does not send data to the cloud.

Many browser developers are joining the AI trend and making built-in AI models part of the primary user experience. Examples include Gemini in Google Chrome, which offers a native AI experience, and the AI models integrated into Mozilla Firefox. In addition, companies that develop large AI models now have their own dedicated AI-native browsers, such as Perplexity AI’s Comet and OpenAI’s Atlas.

These browsers route users’ questions and queries to AI systems running in the cloud, which provide answers and generative content.

The Sigma Eclipse browser, on the other hand, contains a large language model that runs locally on the computer, works offline and stores all the user’s data, queries and chats completely locally. The company says this approach aims to eliminate hidden behavior or backdoors that could alter responses or leak user information through third-party services.

“AI has become incredibly efficient, but also centralized and expensive,” says Nick Trenkler, co-founder of Sigma. “We believe that users don’t have to give up their privacy or pay cloud fees to access advanced AI.”

According to the company, Eclipse’s built-in LLM is unfiltered and carries no ideological or content restrictions, so it does not distort responses unnecessarily. This design decision aligns with Sigma’s intent to give users full control over their AI interactions, without restrictions on topics or perspectives. The release also includes a PDF processing feature that lets users analyze and process documents directly on their computers.

Sigma Eclipse is not the first browser to use local LLMs. Back in 2024, the Brave browser added a “bring your own model” feature to its Leo AI assistant, which included a simple integration with local LLMs. That process demands more technical skill, however, because it requires installing Ollama or another local AI provider.

Local AI models typically need 16 to 32 gigabytes of memory to run efficiently, particularly models of around 7 billion parameters, though the exact figure depends on the hardware. They also need a relatively recent GPU: Nvidia’s RTX 3060 is the low end, and an RTX 4090 or better is recommended. Larger models require more memory and even more powerful GPUs.
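As a rough illustration of where memory figures like these come from, the sketch below estimates the space needed just to hold a model's weights at several common numeric precisions. It is a back-of-the-envelope calculation, not a description of how Sigma Eclipse works: the function name and the precision table are illustrative assumptions, and the estimate ignores the context cache, activations, and runtime overhead, all of which add to the total.

```python
# Rough estimate of the memory needed to hold a model's weights.
# Assumption: weights dominate; context cache, activations, and
# runtime overhead are ignored, so real usage will be higher.

# Bytes used to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return the approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    # Print the estimate for a 7-billion-parameter model at each precision.
    for precision in BYTES_PER_PARAM:
        size = weight_memory_gb(7e9, precision)
        print(f"7B parameters @ {precision}: ~{size:.1f} GB")
```

Under these assumptions, a 7-billion-parameter model at 16-bit precision needs about 14 GB for its weights alone, which, once the operating system and the browser itself are accounted for, is in line with the 16 to 32 GB range cited above. Quantized versions at 8 or 4 bits shrink that to roughly 7 GB and 3.5 GB.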
