Two weeks ago, we launched our new crowdsourced trade value tool, which aggregates answers to simple “which of these two players would you prefer?” questions to create a composite trade value list from our readers. With your help, we recorded nearly 900,000 matchups (897,035, to be precise). Now that the Trade Value series is in the books, it's time to see how the broader FanGraphs audience ranked everyone. Today, I'll walk you through how to open and interpret your results, which can be found here, and share a few interesting facts about the places where the crowd and I agreed or differed.

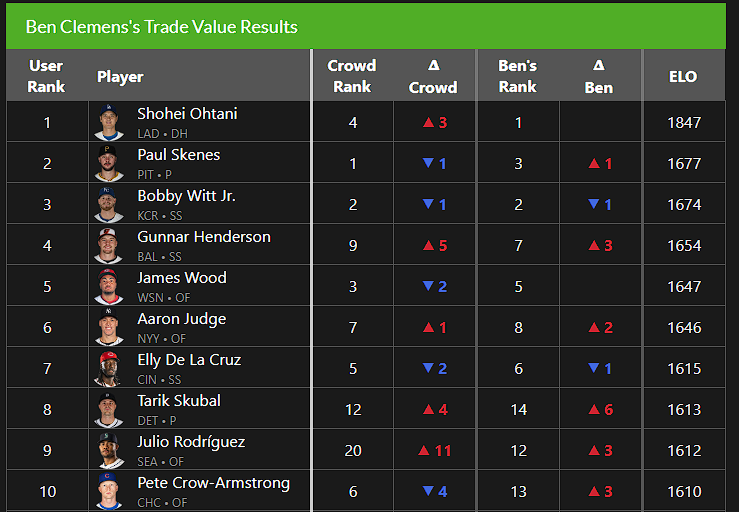

Let’s start with the exercise itself. We sampled up to 500 results from each user's submissions and threw them all into one large pool of matchups. We ordered those matchups randomly and then used Elo ratings to turn them into an ordered list. We then repeated the random ordering a total of 100 times and averaged the results, which counteracts Elo's bias toward more recent matchups. That created a list of the crowd's aggregate preferences. When you open the link above, the first thing you'll see is your own results. For example, I came fairly close to matching my official list:

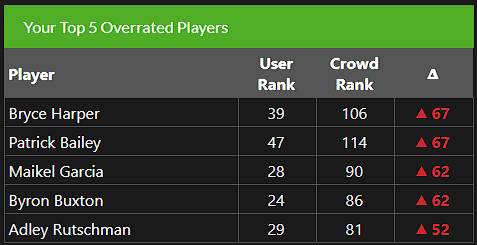
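
The aggregation described above (cap each user's sample, pool the matchups, shuffle, run Elo, repeat, average) can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the tool's actual code: the K-factor of 32, the 1500 starting rating, the function names, and the toy players A, B, and C are all hypothetical, since the article doesn't specify them.

```python
import random
from collections import defaultdict

K_FACTOR = 32.0        # assumed; the article does not state the K-factor
START_RATING = 1500.0  # assumed starting rating for every player


def sample_user_picks(picks, cap=500, rng=random):
    """Keep at most `cap` randomly chosen (winner, loser) picks from one user."""
    picks = list(picks)
    return rng.sample(picks, min(cap, len(picks)))


def elo_pass(matchups):
    """Run one sequential Elo pass over an ordered list of (winner, loser) pairs."""
    ratings = defaultdict(lambda: START_RATING)
    for winner, loser in matchups:
        # Expected score for the winner under the standard logistic Elo curve.
        expected = 1.0 / (1.0 + 10 ** ((ratings[loser] - ratings[winner]) / 400.0))
        delta = K_FACTOR * (1.0 - expected)
        ratings[winner] += delta
        ratings[loser] -= delta
    return dict(ratings)


def averaged_elo(matchups, n_shuffles=100, seed=0):
    """Average Elo ratings over several random orderings of the same pool.

    A single Elo pass weights later matchups more heavily, so averaging over
    shuffled orderings reduces that order dependence.
    """
    rng = random.Random(seed)
    pool = list(matchups)
    players = sorted({p for pair in pool for p in pair})
    totals = {p: 0.0 for p in players}
    for _ in range(n_shuffles):
        rng.shuffle(pool)
        ratings = elo_pass(pool)
        for p in players:
            totals[p] += ratings[p]
    return {p: totals[p] / n_shuffles for p in players}


# Toy pool with made-up players: A usually beats B, and B usually beats C.
toy_pool = (
    [("A", "B")] * 6 + [("B", "A")] * 2
    + [("B", "C")] * 6 + [("C", "B")] * 2
    + [("A", "C")] * 7 + [("C", "A")] * 1
)
toy_ratings = averaged_elo(toy_pool)
for player in sorted(toy_ratings, key=toy_ratings.get, reverse=True):
    print(player, round(toy_ratings[player], 1))
```

Averaging over shuffles keeps the simplicity of sequential Elo while making the final order less sensitive to which matchups happened to come last.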

The page shows you how your list (mine is called “Ben Clemens’s Trade Value Results,” of course, but yours will have your username) compares to both the crowd's aggregate list and my official one. You can also see the players you liked the most and the least relative to the crowd. Because of the way we designed this tool, the middle of the list can get a bit weird (we gave you fewer of those matchups), so don’t be alarmed if, for example, Maikel Garcia ends up in your top 30:

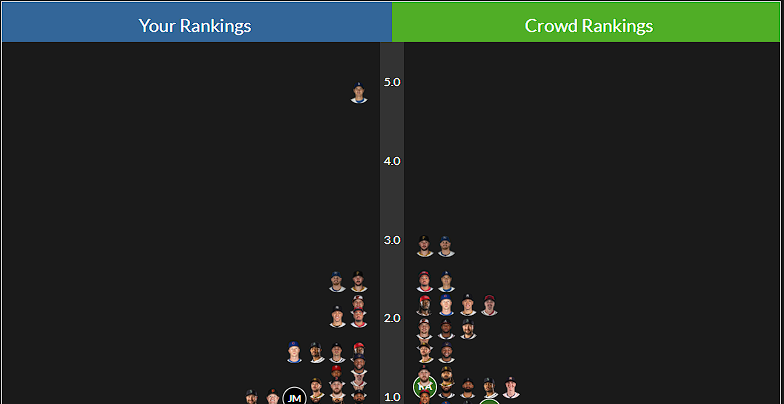

If you liked the tier system I've used in the Trade Value series for the past two years, you're in luck. The “Graphs” option converts your rankings into z-scores and then uses them to create tiers. If you hover over a player's face in that tool, you can see their Elo score based on the matchups you chose, as well as their numeric ranking. They will also be highlighted in the crowdsourced tiers on the right, so that you can compare your work with the group's. The tiers are based exclusively on those z-scores; everyone with a similar score is grouped together. If, like me, you were much higher on one player than the rest of the group, you can see that clearly in the tiering.
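
As a rough illustration of how z-scores can turn ratings into tiers, here is a minimal Python sketch. The article only says that players with similar z-scores are grouped together, so the fixed bucket width of 0.5, the function names, and the toy ratings are all assumptions made for this example rather than a description of how the tool draws its tier boundaries.

```python
import math
from statistics import mean, pstdev


def z_scores(elo):
    """Convert a {player: Elo rating} mapping into {player: z-score}."""
    mu = mean(elo.values())
    sigma = pstdev(elo.values())
    if sigma == 0:
        # Everyone has the same rating, so every z-score is zero.
        return {player: 0.0 for player in elo}
    return {player: (rating - mu) / sigma for player, rating in elo.items()}


def tiers(elo, width=0.5):
    """Group players into tiers by bucketing z-scores into bins of `width`.

    The fixed-width bucketing is an assumption for illustration only.
    Returns a list of tiers, best tier first, each sorted best to worst.
    """
    z = z_scores(elo)
    buckets = {}
    for player, score in z.items():
        buckets.setdefault(math.floor(score / width), []).append(player)
    return [
        sorted(buckets[b], key=lambda p: z[p], reverse=True)
        for b in sorted(buckets, reverse=True)
    ]


# Toy ratings for made-up players: A and B are close, C and D trail behind.
toy_elo = {"A": 1700.0, "B": 1690.0, "C": 1500.0, "D": 1300.0}
for number, tier in enumerate(tiers(toy_elo), start=1):
    print(f"Tier {number}: {', '.join(tier)}")
```

Because the tiers depend only on z-scores, a player you rated far above everyone else ends up in a tier of their own, which is the effect described above.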

Finally, you can look at the full crowdsourced results and a complete breakdown of how my list compared to the crowdsourced list in the tabs on the right side. As I noted above, the middle of individual lists can get a bit funky, but the aggregate crowdsourced list draws on a large enough sample that the effect washes out. One thing I really enjoyed seeing is that my rankings and the crowdsourced rankings matched on nine of the top 10 (I had Cal Raleigh, while you all had Pete Crow-Armstrong).

Now let’s look at a few interesting takeaways from this exercise, starting with a list of the players I was highest on relative to the crowd:

Players I liked more than the crowd

Boy, you certainly hate catchers! I wanted to come up with some broad generalizations here, and that is the one that stands out. Will Smith barely ranking among the top 50 trade values in baseball only makes sense if you just don’t value catchers much at all, but that is logically consistent with the rest of the crowd's rankings. I thought my ranking of Raleigh, ninth, was already low, and he is the only catcher we're even close on (11th in the crowd's rankings). I wrote extensively about how I've tried to change my valuation of catchers, but it is clear that the crowd is far lower on everyone who dons the tools of ignorance. Likewise, if a player was recently injured, the crowd wasn't interested. I think that is pretty reasonable. It shows different preferences, but that seems fine: the crowd pushed Spencer Strider, Eury Pérez, and Yordan Alvarez out of the top 30 and toward the bottom of the top 50 because they are currently injured or returning from injuries, even though I chose otherwise.

Next, I think you will see some general disagreement on stars signed to big-money contracts. Corey Seager? Very unpopular with the crowd. The same was true of Zack Wheeler, which really surprised me, and Byron Buxton, whose contract and injury status are incredibly difficult to untangle. Then there are just a few stragglers: two pitchers who are command-over-stuff types and play on the West Coast in Logan Webb and George Kirby, and then a strong rejection of cheap team control in Jordan Westburg, 105th for the crowd. I am higher on Westburg than the industry consensus, but I think it is fair to say that the crowd is much lower. After all, I showed this list to a bunch of people in front offices, and they didn’t tell me that Westburg should fall out of the top 100.

And the plot thickens when we look at the list of players I was lower on than the crowd:

Players I liked less than the crowd

If I had to generalize here, I would say that the crowd is fonder of the unknown than I am. Roman Anthony in the top 20, Wyatt Langford and Jacob Wilson in the top 30; these are aggressive rankings for young players with short track records. I wrote extensively about how I think about valuing these guys in each of their blurbs, but the crowd clearly disagrees. There is also a fair amount of defensive and positional valuation on display from my side here: many shortstops and plus outfielders were more popular with our readers than with me. Looking at this list tells me that the crowd loves potential more than I do, which is not that surprising. Potential is really fun! One of the most difficult parts of this exercise for me is reconciling the fact that the point of baseball is to win games with the unshakable feeling that new is always better. The simplest explanation I can offer for this list of differences is that we handled that tension, well, differently. That said, “much higher on Jacob Wilson and much lower on Jordan Westburg” is a difficult position to square, and I think it largely comes down to Wilson being the newer, shinier, higher-batting-average option.

Finally, here are the crowd's highest-ranked players who didn't make my top 50:

Top crowdsourced players that I didn't rank

The majority of my “just missed” cohort is on this list; MacKenzie Gore, Jeremy Peña, Jackson Holliday, Nick Kurtz, Cam Smith, and Andy Pages would all have been in my top 60 if I had ranked that deep. Likewise, all of these players other than Juan Soto are in the back of the crowd's top 50, where relative rankings are compressed. Gore at 35th is different from my ranking (51st), but not by a ton. If I had ended up with one of these players in my final rankings, it would not have been strange; the crowd's list looks pretty good, in fact. However, I will admit to a little confusion about a few inclusions on this specific list. Soto is a great player with a big problem; having him 24th and then outside the top 100 seems strange to me. Similarly, Spencer Schwellenbach and Cole Ragans are currently on the 60-day IL. I am open to the idea that you could put them on the list despite those injuries, but I have trouble coming up with a line of reasoning that simultaneously pushes those guys onto the list and the Strider/Pérez/Alvarez group down. I am not saying that it is impossible or anything, only that the crowd's aggregate preferences can produce some strange groupings.

I bet that if we did this exercise again today, Kurtz would end up much higher. Most votes in these things happen in the first few days. Kurtz had a 138 wRC+ at the All-Star break, which was when the tool debuted; by the time voting closed last Friday, an eight-game, 472 wRC+ stretch had pushed his season line up to a 184 wRC+. I would estimate an adjusted placement in the mid-20s, but it is hard to say for sure. Those damn players keep playing while we try to rank them!

That’s about it. You have done the hard work of ranking all these players; now you can play with the results to your heart's content. I even have a small bonus piece of data: the five closest matchups between top players. We instructed our algorithm to set up more matchups between players who were at the top of individual lists, so that we would have more information about whom each user prefers among the best players in baseball. Even with all that extra volume, these choices split right down the middle:

Closest matchups between top players

Thanks again for your help in making this new tool useful; we could not have done it without you all. And of course, I want to offer a round of applause to Keaton Arneson and Sean Dolinar for designing and developing the crowdsourced tool and the results page. I hope you enjoyed stepping into my shoes for a week.
