Lance Martin

The largest lesson that can be read from 70 years of AI research is that general methods that use the calculation are ultimately the most effective and with a large margin.

Rich sutton, The bitter lesson

The bitter lesson has been learned again and again Many domains From AI research, such as chess, go, speech, vision. Utilizing calculation appears to be the most important and the ‘structure’ that we impose on models often limits their ability to use the growing calculation.

What do we mean by “structure”? Often the structure includes inductive prejudices about how we to expect models to solve problems. Computer vision is a good example. For decades, researchers have designed functions (e.g. Sift And PIG) Based on domain knowledge. But these handmade functions limited models to the patterns we had expected. Scaled as a calculation and data, deep networks that scholars have learned functions directly Van Pixels performed better than methods made by hand.

With this in mind, Hyung Chung’s (OpenAI) won conversation About his research approach is interesting:

Add structures required for the given level of calculation and available data. Remove them later, because these shortcuts will be a further improvement in the bottleneck.

The bitter lesson in AI Engineering

The bitter lessons also apply to AI EngineeringThe vessel of construction applications on top of rapidly improving models. As an example, Boris (Lead on Claude -code) named That the bitter lesson strongly influenced its approach. And I have discovered that Hyung’s speech offers some useful lessons for AI engineering. Below I will illustrate this with a story about building open-deep research.

Add structure

I had frustrating experiences with agents in 2023. It was difficult to have LLMs call tools in a reliable way and context windows were small. At the beginning of 2024 I became interested in workflows. Instead of calling an LLM autonomous tools in a loop, Workflows LLM evokes in pre -defined code paths.

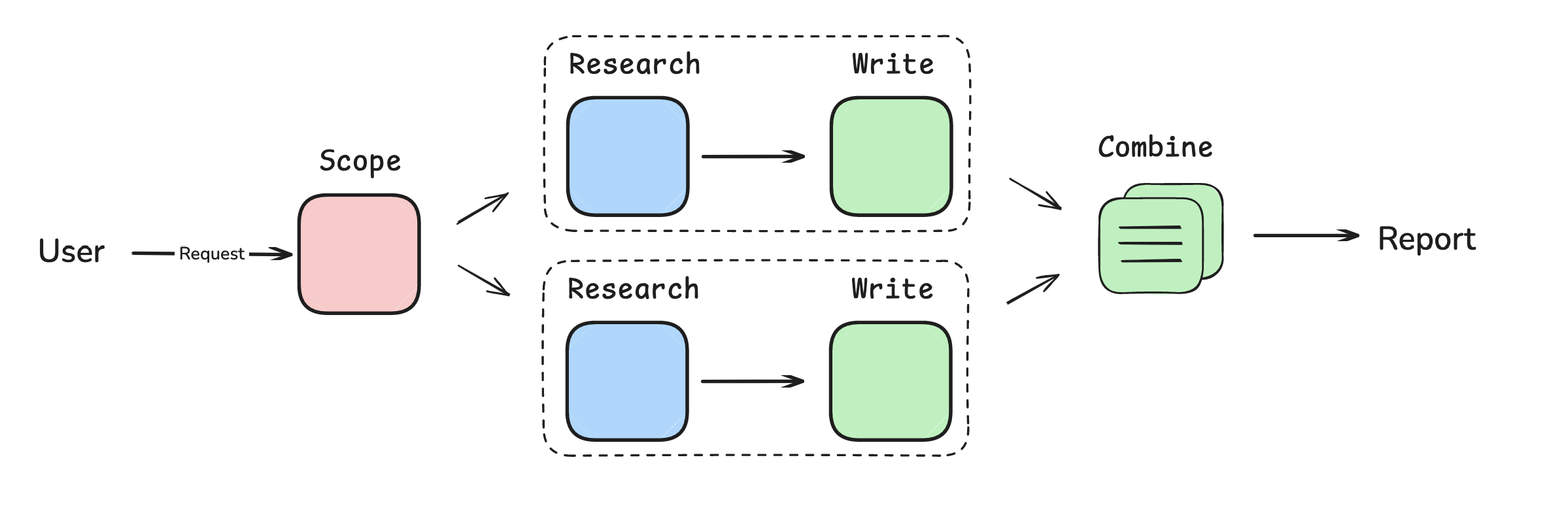

At the end of 2024, me issued An orchestrator worker Workflow for web research. The orchestrator was an LLM call that accepted a user’s request and returned a list of reports to write. A set of employees in parallel with research and writing all reports. Then I just combined them together.

So what was the “structure”? I have imposed a few assumptions on how LLMS should perform quickly, reliable research: it must fall apart in a series of reports, research and write them in parallel to make it quickly and prevent toolbid to make it more reliable.

Bottlenecks

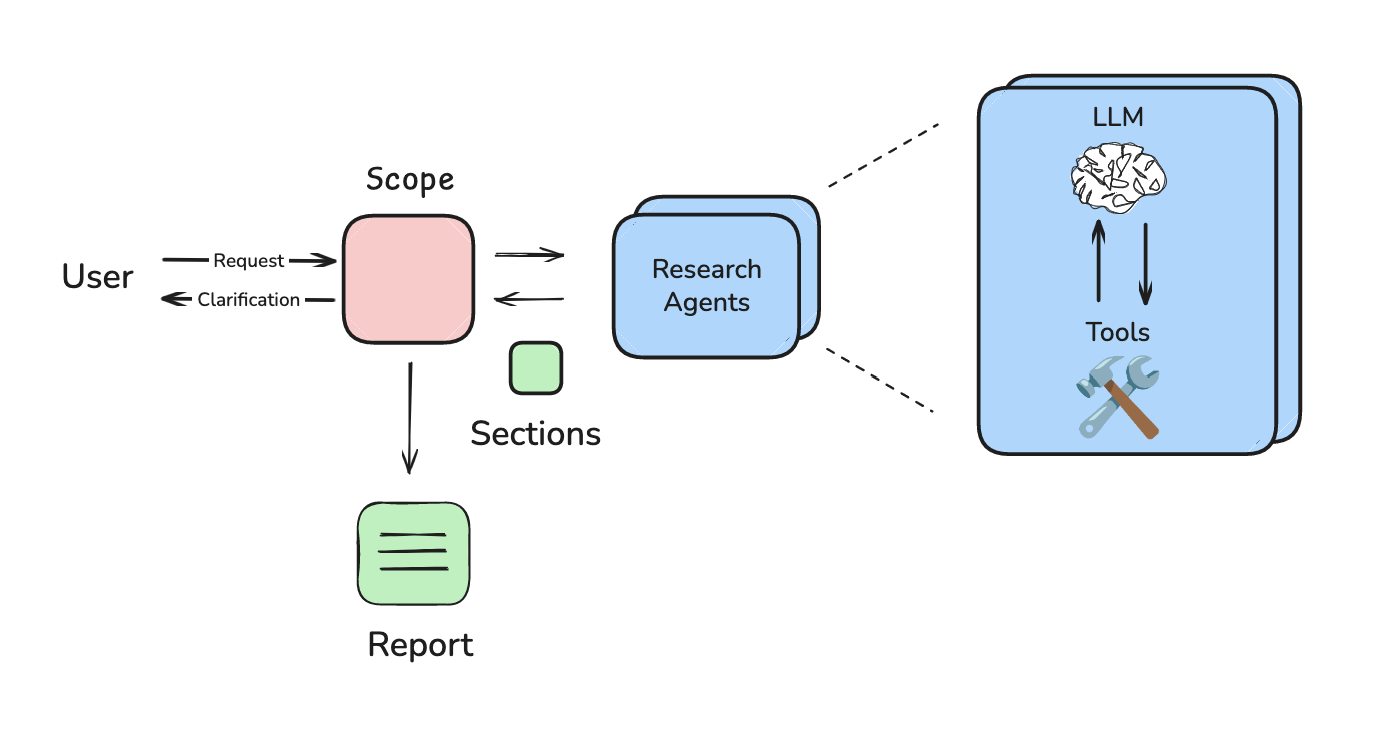

Things started to shift when 2024 ended. Calling tool was getting better. Against Winter 2025, MCP had won a considerable momentum and it was clear that agents were Good suitable into the research task. But the structure that I have imposed prevented me from making use of these improvements.

I did not use a tool group, so I could not benefit from the growing MCP ecosystem. The workflow always dissolved the request in reports, a rigid research strategy that was not suitable for all cases. The reports were sometimes incoherent because I forced employees to write sections in parallel.

Remove structure

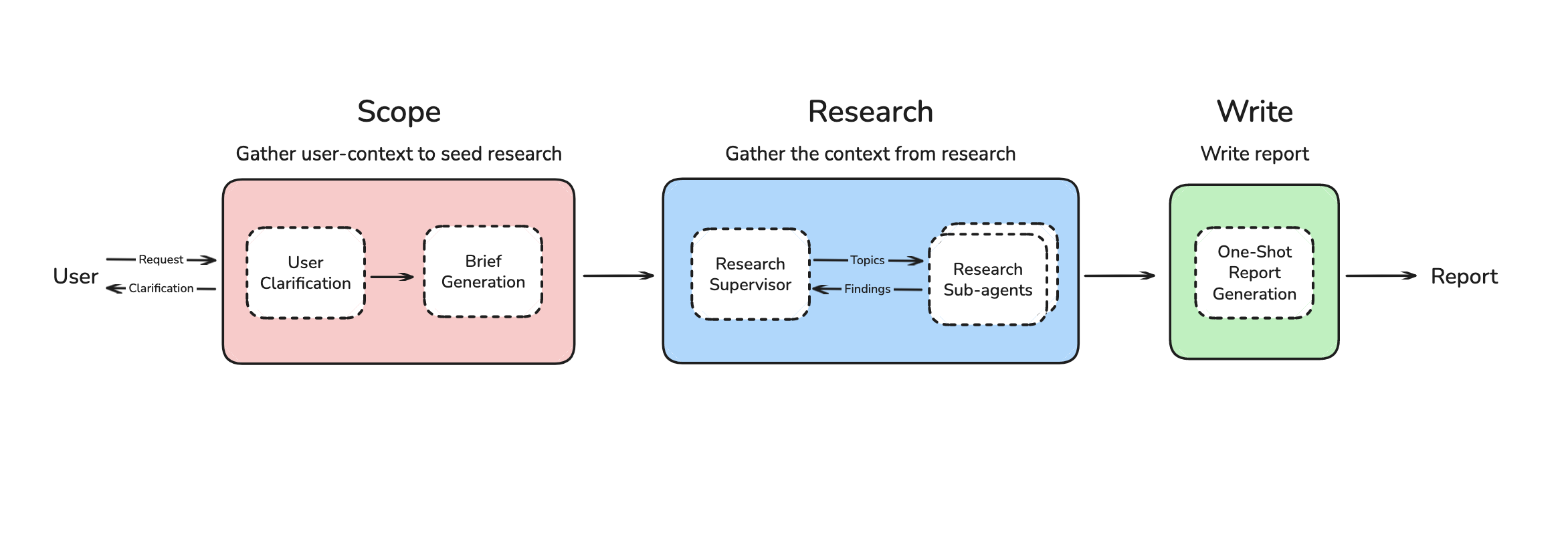

I moved to a multi-agent system, with which I could use aids and the system was flexibly planning the research strategy. But I designed it in such a way that every sub -agent still wrote his own report section. This came across a problem that Walden Yan van van Cognition Called: multi-agent systems are difficult because the subtenter often do not communicate effectively. Reports were still Disjoint because my sub-agent’s parallel sections wrote.

This is one of the most important points of Hyung’s speech: we often remove All the structure that we add while we update our methods. In my case I moved to an agent, but I still forced every agent to write part of the report in parallel.

I went to write a final step. The system could now flexibly plan the research strategy, use Collect multi-agent contextAnd write the report in One-shot based on the collected context. It scores a 43.5 Deep research bench (Top 10), which is not bad for a small open source effort (and close to agents who use RL Or benefit from much greater efforts).

Lesson

AI Engineering can benefit from some simple lessons from Chung’s conversation:

- Understand your application structure

- Re -evaluate the structure as models improve

- Make it easy to remove the structure

At the first point, consider which LLM performance arrangements are baked in the design of your application. For my first workflow I avoided tools because it was not reliable (at that time). This was no longer true a few months later! Jared Kaplan (co-founder of anthropic) Recently it has lit that it can even be useful to “build things that are not yet working completely” because the models will catch up (often fast).

At the second point I was a bit slow to re -evaluate my assumptions as a tool improving. And at the last point I agree Walden (cognition) And Harrison (Langchain) That agentab strives can be the risk because they can make it more difficult to remove the structure. I still use a framework (long graph) for its useful general functions (eg checkpointing), but keep up low -level Building blocks (eg nodes and edges) that I can easily (re) configure.

The design philosophy for building AI applications is still in its infancy. Still, as Hyung said, it is useful to concentrate on the driving force that we can Predict: Model gets much better. Designing AI applications to benefit from this is probably the most important thing.

Credits

Thanks Vadym Barda For first evals, MCP support and useful discussion. Thanks Nick Huang For work on the multi-agent implementation and deep research bank Evals.

#Learn #bitter #lesson