While online discussions obsess over whether ChatGPT means the end of Google, websites are losing revenue because of a much more real and pressing problem: some of their most valuable pages are invisible to the systems that matter.

Because while the bots have changed, the game has not. The content of your website must be crawlable.

Between May 2024 and May 2025, AI crawler traffic increased by 96%, with GPTBot’s share rising from 5% to 30%. But this growth doesn’t replace traditional search traffic.

Semrush’s analysis of 260 billion rows of clickstream data showed that people who start using ChatGPT maintain their Google search behavior. They don’t switch; they expand.

This means that enterprise sites must satisfy both traditional crawlers and AI systems while working within the same crawl budget as before.

The dilemma: crawl volume versus revenue impact

Many companies get crawlability wrong because they focus on what is easy to measure (the total number of pages crawled) rather than what actually drives revenue (which pages are crawled).

When Cloudflare analyzed the behavior of AI crawlers, it found a troubling inefficiency. For example, for every visitor that Anthropic’s Claude refers back to websites, ClaudeBot crawls tens of thousands of pages. This lopsided crawl-to-referral ratio reveals a fundamental asymmetry of modern search: massive consumption, minimal traffic return.

That’s why it’s imperative to direct crawl budget to your most valuable pages. In many cases, the problem is not that there are too many pages. It’s that the wrong pages are eating up your crawl budget.

The PAVE framework: prioritizing revenue

The PAVE framework helps manage the crawlability of both search channels. It provides four dimensions that determine whether a page deserves crawl budget:

- P – Potential: Does this page have realistic ranking or referral potential? Not all pages need to be crawled. If a page isn’t optimized for conversions, offers limited content, or has minimal ranking potential, you’re wasting crawl budget that could be going to value-generating pages.

- A – Authority: Authority signals are familiar territory for Google, but as shown in Semrush Enterprise’s AI Visibility Index, if your content doesn’t carry enough of them (such as clear EEAT and domain credibility), AI bots will skip it too.

- V – Value: How much unique, synthesizable information exists per crawl request? Pages that require JavaScript rendering take 9x longer to crawl than static HTML. And remember: most AI crawlers skip JavaScript entirely.

- E – Evolution: How often does this page change in meaningful ways? Crawl demand increases for pages that are regularly updated with valuable content. Static pages are automatically given a lower priority.

Server-side rendering is a revenue multiplier

Sites that rely heavily on JavaScript pay a 9x rendering tax on their crawl budget in Google. And most AI crawlers don’t run JavaScript. They take the raw HTML and move on.

If you rely on client-side rendering (CSR), where content is assembled in the browser after JavaScript runs, you’re hurting your crawl budget.

Server-side rendering (SSR) flips the equation entirely.

With SSR, your web server builds the complete HTML before sending it to browsers or bots. No JavaScript execution is required to access the main content. The bot gets what it needs on the first request. Product names, prices, and descriptions are all immediately visible and indexable.
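One rough way to see what a non-rendering crawler gets is to read the raw HTML with scripts stripped out and check whether your key facts are still there. The sketch below is illustrative only: the function name, the sample markup, and the product facts are assumptions, and it uses Python’s standard `html.parser` rather than any real crawler’s logic.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text outside <script> and <style>, as a non-rendering bot might."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only text that is not inside a script or style element.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(raw_html, required_facts):
    """Return the required facts that do not appear in the static HTML text."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [fact for fact in required_facts if fact.lower() not in text]


# Hypothetical pages: one server-rendered, one that fills in content via JavaScript.
ssr_page = "<html><body><h1>Trail Boot</h1><p>$129.00</p></body></html>"
csr_page = (
    '<html><body><div id="root"></div>'
    '<script>render({"name": "Trail Boot", "price": "$129.00"})</script>'
    "</body></html>"
)

facts = ["Trail Boot", "$129.00"]
print(missing_from_raw_html(ssr_page, facts))
print(missing_from_raw_html(csr_page, facts))
```

For the server-rendered page nothing is reported missing, while the client-rendered page fails on both facts because they only exist inside a script.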

But this is where SSR becomes a real revenue multiplier: this extra speed not only helps bots, but also dramatically improves conversion rates.

Deloitte’s analysis with Google found that just a 0.1 second improvement in mobile load time delivers:

- 8.4% increase in retail conversions

- 10.1% increase in travel conversions

- 9.2% increase in average retail order value

SSR makes pages load faster for users and bots because the server does the heavy lifting once and then presents the pre-rendered result to everyone. No redundant processing on the client side. No delays in JavaScript execution. Just fast, searchable, convertible pages.

For enterprise sites with millions of pages, SSR can be a key factor in whether bots and users actually see and convert on your valuable content.

The disconnected data gap

Many companies are flying blind because of disconnected data.

- Crawl logs live in one system.

- Your SEO rank tracking lives in another.

- Your AI search monitoring lives in a third.

This makes it almost impossible to definitively answer the question, “What crawl issues are currently costing us revenue?”

This fragmentation compounds the cost of making decisions without complete information. Every day you work with siloed data, you risk optimizing for the wrong priorities.

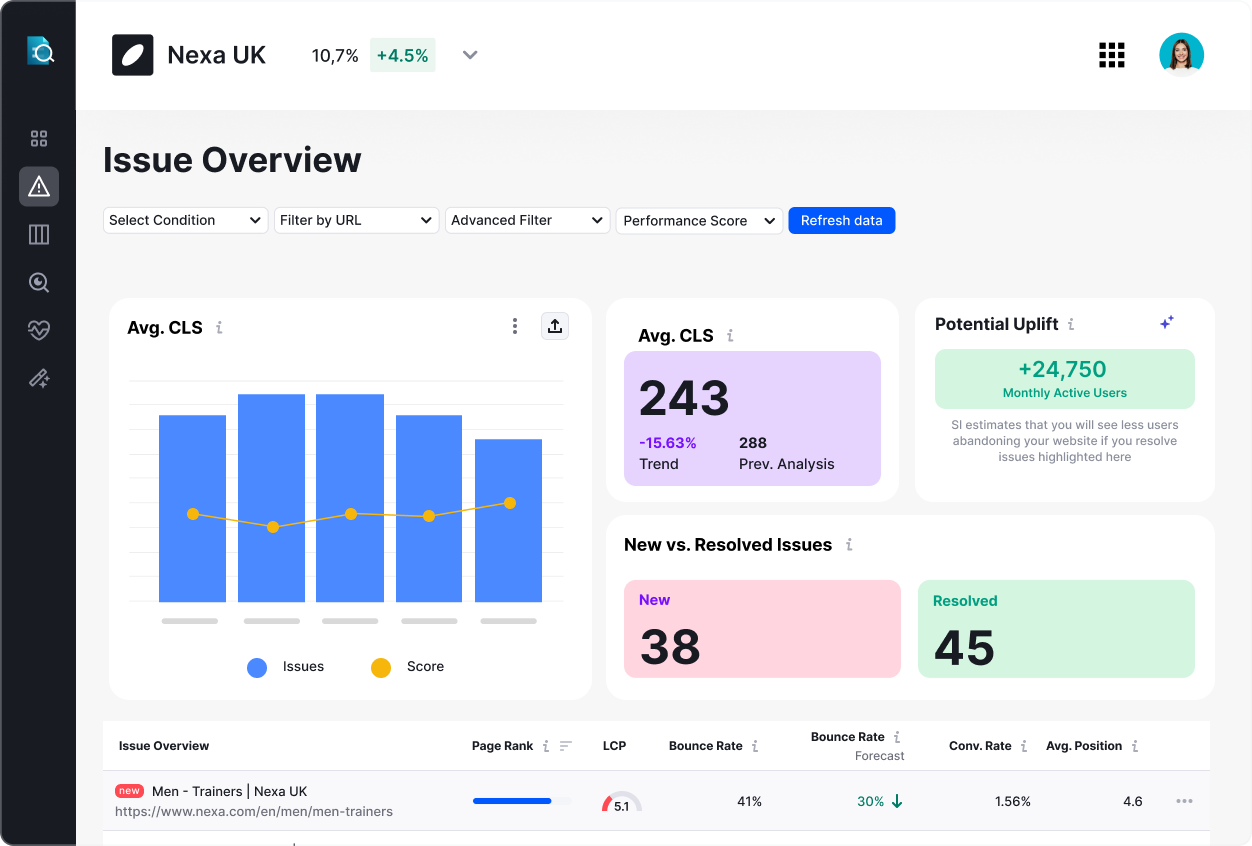

The companies that solve crawlability and manage the health of their sites at scale aren’t just collecting more data. They unite crawl intelligence with search performance data to create a complete picture.

When teams can segment crawl data by business unit, compare pre- and post-deployment performance side by side, and correlate crawl status with actual search visibility, they transform crawl budget from a technical mystery into a strategic lever.

1. Perform a crawl audit using the PAVE framework

Use the Google Search Console Crawl Stats report alongside log file analysis to identify which URLs are consuming the most crawl budget. But this is where most businesses hit a wall: Google Search Console isn’t built for complex, multi-regional sites with millions of pages.
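As a starting point for the log file side of that audit, here is a minimal sketch that counts bot requests per site section. It assumes access logs in the common Combined Log Format; the bot token list and the idea of grouping by first path segment are illustrative choices, not a standard.

```python
import re
from collections import Counter

# Combined Log Format: ip - - [time] "METHOD path HTTP/x" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

# User-agent substrings to look for (an illustrative, incomplete list).
BOT_TOKENS = ("Googlebot", "GPTBot", "ClaudeBot")


def crawl_hits_by_section(log_lines, bot_tokens=BOT_TOKENS):
    """Count bot requests per (bot, first path segment)."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        agent = match["agent"].lower()
        bot = next((t for t in bot_tokens if t.lower() in agent), None)
        if bot is None:
            continue  # not one of the crawlers we track
        path = match["path"].split("?", 1)[0]
        section = "/" + path.strip("/").split("/", 1)[0]
        hits[(bot, section)] += 1
    return hits
```

Passing an open log file to `crawl_hits_by_section` and calling `.most_common(20)` on the result gives a quick view of which sections each bot spends its requests on. User-agent strings can be spoofed, so treat the counts as a rough signal rather than verified bot traffic.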

This is where scalable site health management becomes critical. Global teams need the ability to segment crawl data by region, product line, or language to see exactly which parts of the website are burning crawl budget instead of driving conversions. This is the kind of precision segmentation that Semrush Enterprise’s Site Intelligence makes possible.

Once you have an overview, apply the PAVE framework: If a page scores low on all four dimensions, consider blocking it from crawls or consolidating it with other content.
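The decision rule above can be sketched in a few lines. Everything numeric here is an assumption for illustration: the 0 to 1 scale, the 0.3 cutoff for "low," and the intermediate "review" tier are not part of the PAVE framework as described, which only says that pages scoring low on all four dimensions are candidates for blocking or consolidation.

```python
from dataclasses import dataclass


@dataclass
class PaveScore:
    """Scores from 0.0 (weak) to 1.0 (strong) for each PAVE dimension."""
    potential: float
    authority: float
    value: float
    evolution: float


def crawl_recommendation(score, low=0.3):
    """Map a PAVE score to a coarse action. The threshold is an assumed cutoff."""
    dims = (score.potential, score.authority, score.value, score.evolution)
    low_count = sum(1 for d in dims if d < low)
    if low_count == 4:
        return "block or consolidate"  # low on all four dimensions
    if low_count >= 2:
        return "review"  # assumed middle tier for mixed results
    return "keep crawlable"


# Hypothetical pages scored by hand.
print(crawl_recommendation(PaveScore(0.1, 0.2, 0.1, 0.0)))
print(crawl_recommendation(PaveScore(0.9, 0.8, 0.7, 0.2)))
```

How you arrive at each score is the hard part and depends on your own data; the point of the sketch is only that the final rule is simple enough to automate once the scores exist.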

Targeted optimizations, such as improving internal linking, fixing page depth issues, and updating sitemaps to include only indexable URLs, can also pay huge dividends.
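For the sitemap piece, a minimal sketch might filter a crawl export down to indexable URLs before writing the file. The page dictionary keys (`url`, `status`, `noindex`, `canonical`) are a hypothetical schema, and the definition of "indexable" used here (200 status, no noindex, self-canonical) is a common working assumption rather than a complete rule.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML containing only pages that look indexable."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        indexable = (
            page["status"] == 200
            and not page["noindex"]
            and page["canonical"] in (None, page["url"])
        )
        if indexable:
            url_element = ET.SubElement(urlset, "url")
            ET.SubElement(url_element, "loc").text = page["url"]
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical crawl export rows.
pages = [
    {"url": "https://example.com/a", "status": 200, "noindex": False, "canonical": None},
    {"url": "https://example.com/b", "status": 404, "noindex": False, "canonical": None},
    {"url": "https://example.com/c", "status": 200, "noindex": True, "canonical": None},
    {"url": "https://example.com/d", "status": 200, "noindex": False,
     "canonical": "https://example.com/a"},
]
print(build_sitemap(pages))
```

Only the first URL ends up in the output; the 404, the noindex page, and the page that canonicalizes elsewhere are left out.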

2. Implement continuous monitoring, not periodic audits

Most companies conduct quarterly or annual audits, take a snapshot and call it a day.

But crawl budget and broader site health issues don’t wait for your audit schedule. A deployment on Tuesday can quietly leave key pages invisible on Wednesday, and you won’t discover it until your next review, after weeks of lost revenue.

The solution is to implement monitoring that catches problems before they worsen. When you can align audits with deployments, track your site historically, and compare releases or environments side-by-side, you move from reactive fire drills to a proactive revenue protection system.
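A simple version of that release comparison is to diff two crawl snapshots and flag pages that were crawlable before a deployment and are not afterward. This is a sketch under assumptions: the snapshot format (a dictionary of URL to `status` and `indexable`) is hypothetical, and real monitoring would need to pull these values from your own crawler or logs.

```python
def newly_invisible(before, after):
    """Return URLs that were visible in `before` but are not visible in `after`."""

    def visible(snapshot, url):
        page = snapshot.get(url)
        return page is not None and page["status"] == 200 and page["indexable"]

    return sorted(
        url for url in before if visible(before, url) and not visible(after, url)
    )


# Hypothetical snapshots taken before and after a Tuesday deployment.
tuesday = {
    "/products/boots": {"status": 200, "indexable": True},
    "/products/tents": {"status": 200, "indexable": True},
    "/cart": {"status": 200, "indexable": False},
}
wednesday = {
    "/products/boots": {"status": 200, "indexable": False},  # noindex slipped in
    "/products/tents": {"status": 200, "indexable": True},
    "/cart": {"status": 200, "indexable": False},
}
print(newly_invisible(tuesday, wednesday))
```

Running a check like this as part of each release is one way to surface a regression the day it ships instead of at the next audit.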

3. Systematically build your AI authority

AI search works in phases. When users research general topics (“best waterproof hiking boots”), AI synthesizes content from review sites and comparison content. But when users research specific brands or products (“are Salomon X Ultra waterproof and how much do they cost?”), AI completely changes its research approach.

Your official website becomes the primary source. This is the authority game, and most enterprises lose out by neglecting their fundamental information architecture.

Here’s a quick checklist:

- Make sure your product descriptions are factual, complete, and ungated (no JavaScript-heavy content)

- Clearly state essential information, such as prices, in static HTML

- Use structured data markup for technical specifications

- Add feature comparisons on your own domain rather than relying on third-party sites
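To make the structured data item concrete, here is a minimal sketch that builds a schema.org `Product` snippet as JSON-LD for embedding in server-rendered HTML. The function name and the sample product are hypothetical; the schema.org types and properties used (`Product`, `Offer`, `price`, `priceCurrency`, `additionalProperty`, `PropertyValue`) are real, but which properties suit your catalog is an assumption to check against your own requirements.

```python
import json


def product_jsonld(name, description, price, currency, specs):
    """Return a <script> tag with schema.org Product markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
        "additionalProperty": [
            {"@type": "PropertyValue", "name": key, "value": value}
            for key, value in specs.items()
        ],
    }
    # Escape "</" so a value can never close the surrounding script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical product details.
snippet = product_jsonld(
    name="Trail Boot",
    description="Waterproof hiking boot",
    price=129,
    currency="USD",
    specs={"Weight": "410 g", "Waterproof": "Yes"},
)
print(snippet)
```

The markup complements the visible page rather than replacing it: the same price and specifications should also appear as plain text in the static HTML.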

Visibility is profitability

Your crawl budget problem is really a revenue recognition problem disguised as a technical problem.

Every day that valuable pages are invisible is a day of lost competitive ground, missed conversions, and compounding revenue loss.

With crawler traffic increasing and ChatGPT now reporting more than 700 million weekly users, the stakes have never been higher.

The winners won’t be those with the most pages or the most advanced content, but those who optimize site health so that bots reach their highest-value pages first.

If you manage millions of pages across multiple regions, consider how unified crawl intelligence (the combination of deep crawl data with search performance metrics) can transform your site health management from a technical issue into a revenue protection system. Learn more about Semrush Enterprise’s Site Intelligence.

The opinions expressed in this article are those of the sponsor. MarTech neither confirms nor disputes the conclusions presented above.
