Introduction

One of our goals is to help customers succeed with inventory discovery, idea generation, watchlist management, and the purchasing journey. We have trained the agent to help not only seasoned investors, but also those who are just starting to invest. We want to make it easy, no matter your experience level. The agent exposes some of our search APIs in ways that are faster to develop than a full user interface, while also giving the user more granular controls, which is great for experimentation. For example, you can request Majestic data integration through the agent, even though we haven’t prioritized it in the UI yet.

Natural conversation flow

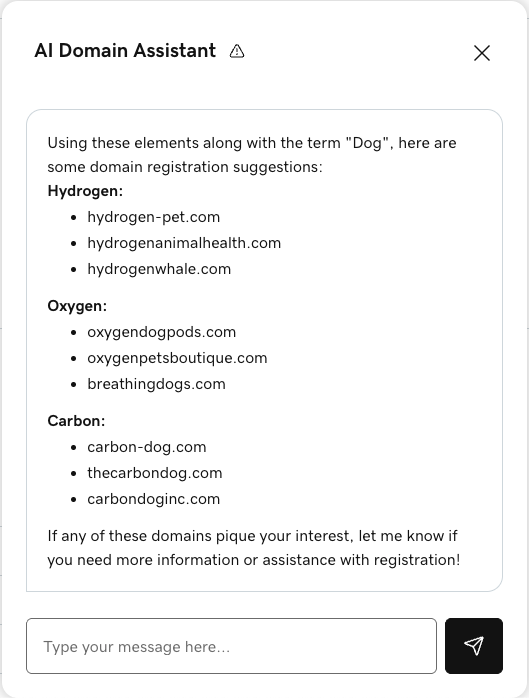

One of the agent concepts that originally opened our minds was chat as a tool. This gives our agent the freedom to decide what content to give the user and when. Although simple, this idea makes the conversation feel much more natural. Instead of ping-ponging back and forth one message at a time, the agent can send the user multiple messages in a row, which makes the exchange friendlier and more informative in an incremental way. The screenshot in this section is an excerpt that highlights the agent’s level of communication and generative discovery capabilities.

Human-to-human conversation is not a strictly sequential back and forth. In the excerpt, the agent first responds with the items it has generated. It could have asked for confirmation, but decided to go straight to the results of its tool calls. This gives the user something to think about and collaborate on. The screenshots carry no notion of time, but there are natural pauses between messages to break up the amount of data for the user.

Tool definition

We take this back-and-forth communication into account in our agent and system design. The first step is teaching the agent, through tool definitions, how to send a message instead of asking a question and waiting for a response. Depending on how you structure your project, you can achieve this as one tool with actions or as multiple tools. Our team has discussed how this approach could be extended to give the agent more flexibility in future iterations. For example, you could let the agent decide that it wants to present a drop-down list and a series of checkboxes to best collect user input.

Below is a simple tool definition that allows an agent to handle the communication flow:

```yaml
name: Chat
id: chat
description: |-
  This tool should be used for communication with the user. For the `action`:
  * `input`: used to request input from the user.
  * `output`: used to send a message to the user.
parameters:
  properties:
    action:
      description: Action is the chat action to take.
      enum:
        - input
        - output
      type: string
    body:
      oneOf:
        - "$ref": "#/$defs/OutputArguments"
        - "$ref": "#/$defs/InputArguments"
  required:
    - action
    - body
  type: object
  "$defs":
    OutputArguments:
      description: |-
        Sends a message to the user. Use this tool to convey information back to the user
        or to prompt them for further action.
      properties:
        message:
          type: string
      required:
        - message
      type: object
    InputArguments:
      description: |-
        Requests input from the user and pauses agent execution. Use this tool after you
        have asked the user a question and need a response before proceeding.
      properties: {}
      type: object
```

The tool uses two actions: `output` and `input`. The `output` action sends a message to the user to convey information or prompt the user to take action. The `input` action requests input from the user and pauses the agent’s execution until the user responds.
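To make the two actions concrete, here is a minimal Python sketch of how a runtime might dispatch a single call to the chat tool. It is an illustration under assumptions, not our production code: `ChatResult` and `handle_chat_call` are hypothetical names, and the only details taken from the definition above are the `action` values and the `message` field.

```python
from dataclasses import dataclass, field


@dataclass
class ChatResult:
    """Outcome of one chat tool call (hypothetical type for this sketch)."""
    pause: bool  # True when the agent must stop and wait for the user
    messages: list[str] = field(default_factory=list)


def handle_chat_call(arguments: dict) -> ChatResult:
    """Dispatch one call to the `chat` tool based on its `action`."""
    action = arguments.get("action")
    body = arguments.get("body")
    if not isinstance(body, dict):
        raise ValueError("chat tool call requires an object `body`")

    if action == "output":
        # `output` carries a message and lets the agent keep going.
        message = body.get("message")
        if not isinstance(message, str):
            raise ValueError("`output` requires a string `message`")
        return ChatResult(pause=False, messages=[message])

    if action == "input":
        # `input` carries no payload; it only signals a pause.
        return ChatResult(pause=True)

    raise ValueError(f"unknown chat action: {action!r}")
```

Because `output` does not pause, the agent can issue several `output` calls in a row before a single `input` call, which is what produces the multi-message flow described earlier.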

System design

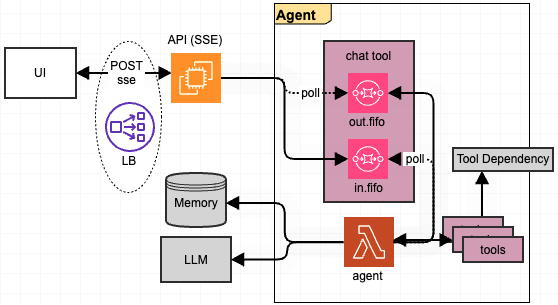

After the agent decides how to respond, the system must handle a streaming response for a single request. We implemented Server-Sent Events (SSE) to cover this pattern. SSE offers the most stability with a good balance of system complexity and user experience. We isolate this complexity between our API and the user interface. We don’t need to worry about streaming to the agent or from the LLM, because this approach represents these events as multiple tool calls. We experimented with SSE, WebSockets, and HTTP POST requests, and the ability to switch between them shows that our design is flexible and robust. The diagram in this section shows the basic system architecture.

Our agent operates as an independent entity, with an API in front of it that manages the SSE interface and its connections. As a result, the agent itself is stateless. We pair it with an LLM provider and a GoDaddy memory service. For a given conversation, we receive the next message, look up the entire conversation, send a subset of the conversation to the LLM, and finish by running tools. Because chat is also a tool, this acts as an infinite loop that pauses to wait for user input. We started this project before MCP was widespread, so our tools interface directly with APIs. Converting to MCP where it makes sense is something we are catching up on. Likewise, we can easily add an agent-to-agent API interface for future extensibility.
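The loop described above can be sketched in a few lines of Python. This is a simplified illustration under assumptions, not the actual service: the in-memory `memory` dict stands in for the memory service, `call_llm` stands in for the LLM provider, the tool-call shape (`id`, `arguments`) and the SSE event names `message` and `awaiting_input` are all hypothetical, and non-chat tools are replaced by a placeholder result.

```python
import json
from typing import Callable, Iterator

Message = dict  # e.g. {"role": "user", "content": "..."}


def sse_frame(event: str, data: dict) -> str:
    """Encode one SSE frame: event name, single-line JSON data, blank line."""
    return f"event: {event}\ndata: {json.dumps(data)}\n\n"


def run_turn(
    conversation_id: str,
    user_message: str,
    memory: dict[str, list[Message]],
    call_llm: Callable[[list[Message]], dict],
    context_window: int = 20,
    max_steps: int = 10,
) -> Iterator[str]:
    """Process one user message, yielding SSE frames until the agent asks for input."""
    # The agent holds no state: the whole history comes from the memory store.
    history = memory.setdefault(conversation_id, [])
    history.append({"role": "user", "content": user_message})

    for _ in range(max_steps):  # guard against a runaway tool loop
        # Only a subset of the conversation is sent to the LLM.
        tool_call = call_llm(history[-context_window:])
        history.append({"role": "assistant", "tool_call": tool_call})

        if tool_call["id"] != "chat":
            # Placeholder: a real system would execute the tool here.
            history.append({"role": "tool", "content": f"ran {tool_call['id']}"})
            continue

        args = tool_call["arguments"]
        if args["action"] == "input":
            yield sse_frame("awaiting_input", {})
            return  # pause until the next user message arrives
        yield sse_frame("message", {"text": args["body"]["message"]})
```

Each `output` call becomes one SSE frame on the same open response, so streaming stays between the API and the user interface, and neither the agent nor the LLM call needs to stream.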

Prompt security

Agent hijacking is a major concern for us. Hijacking allows fraudulent messages to be inserted into a conversation to maintain the facade of proper functioning while skimming data or performing other nefarious actions. We don’t want our agent to be used as a tool to attack other systems or people. Our prompt security and safety measures help limit such attempts, and our prompt safety monitoring gives us insights to learn from and improve our mitigation strategies.

We spent a lot of time talking to our agent, trying to get it to take inappropriate actions. These range from providing information unrelated to domain investing to, worse, exposing parts of GoDaddy’s inner workings. LLMs are just as susceptible to social engineering as we are. Pressure tactics, such as a sense of urgency, can deceive an agent as easily as a human. We found that being explicit about what the agent can do is generally not enough. Instead, we also told the agent what it can’t do, spread across the system prompt and tool definitions.

In our system prompt we have defined Security and Privacy sections with high-level guidance on focus, technical privacy, tool privacy, and conversation redirection. This was enough to keep the agent in check during a conversation, even if the user started to get pushy. Furthermore, in our tool definitions we have also defined a Security section to remind the agent what not to expose and, where possible, how to respond. While it’s impossible to perfectly lock down a freeform chat agent, attention and dedication can go a long way.

Finally, we have the idea of prompt safety, where we score prompts on topics such as explicit content, sexual content, and personal information. Sufficiently high scores raise flags that we can then investigate and learn from. That data shows us whether our agent is holding up, whether we need to further fine-tune our prompts for better protection, or whether a user has attempted something malicious and needs investigation for possible suspension. This is all data that we collect, process, and experiment with.
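As a rough illustration of the score-then-flag idea, the Python sketch below separates scoring from flagging. Everything specific in it is an assumption: the threshold value, the topic names, and `toy_scorer`, which only detects email addresses as a stand-in for a real moderation model or service.

```python
import re
from typing import Callable

# Hypothetical threshold; in practice this would be tuned from collected data.
FLAG_THRESHOLD = 0.8

# Very rough email matcher, used only to give the toy scorer something to detect.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def toy_scorer(prompt: str) -> dict[str, float]:
    """Stand-in scorer: returns a score per topic in the range 0.0 to 1.0."""
    return {
        "personal_information": 1.0 if EMAIL.search(prompt) else 0.0,
        "explicit": 0.0,  # not implemented in this sketch
        "sexual": 0.0,    # not implemented in this sketch
    }


def flag_prompt(
    prompt: str,
    scorer: Callable[[str], dict[str, float]] = toy_scorer,
    threshold: float = FLAG_THRESHOLD,
) -> list[str]:
    """Return the topics whose score meets the threshold, sorted by name."""
    scores = scorer(prompt)
    return sorted(topic for topic, score in scores.items() if score >= threshold)
```

Keeping the scorer behind a plain callable means the flagging logic stays the same if the scoring backend changes, and flagged topics can be logged alongside the conversation for later review.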

Conclusion

Part of the challenge of offering an agent is making it feel natural. The more freedom you can give an agent, the more useful it can become. Chat is at the forefront of that experience, and teaching an agent to communicate freely is one way we achieve this. We will always discover quirks and edge cases, but careful prompt tuning will keep them to a minimum. Moreover, attention to prompt security ensures that we can keep a close eye on whether the agent performs unexpected actions. By keeping these concepts in mind in our architecture, we can easily discover, iterate, and experiment.

In the future, we plan to add more capabilities to our agent, making it even easier to perform auction-related activities, including automating some flows.
